Transformers revolutionized AI but struggle with long sequences because self-attention has quadratic complexity. This leads to high computational and memory costs that limit scalability and real-time use, creating a need for faster, more efficient alternatives.

Mamba4 addresses this using state space models with selective mechanisms, enabling linear-time processing while maintaining strong performance. It suits tasks like language modeling, time-series forecasting, and streaming data. In this article, we explore how Mamba4 overcomes these limitations and scales efficiently.

Background: From Transformers to State Space Models

Sequence modeling evolved from RNNs and CNNs to Transformers, and now to State Space Models (SSMs). RNNs process sequences step by step, offering fast inference but slow training. Transformers introduced self-attention for parallel training and strong accuracy, but at a quadratic computational cost. For very long sequences, they become impractical due to slow inference and high memory usage.

To address these limits, researchers turned to SSMs, originally from control theory and signal processing, which provide a more efficient approach to handling long-range dependencies.

Limitations of Attention Mechanism (O(n²))

Transformers compute attention using an n×n matrix, giving O(n²) time and memory complexity. During generation, each new token must attend to all previous tokens, and the cache of their keys and values (the KV cache) keeps growing. Doubling the sequence length roughly quadruples the computation, which creates a major bottleneck. In contrast, RNNs and SSMs use a fixed-size hidden state to process tokens sequentially, achieving linear complexity and better scalability for long sequences.

- Transformer attention evaluates all token pairs, which results in O(n²) complexity.

- Each new token must be compared against every earlier token, which adds latency as the sequence grows.

- Long KV caches consume a lot of memory and slow down generation.

For example:

```python
def attention_cost(n):
    return n * n  # O(n^2): one attention score per token pair

sequence_lengths = [100, 500, 1000, 5000]

for n in sequence_lengths:
    print(f"Sequence length {n}: Cost = {attention_cost(n)}")
```

Output:

```
Sequence length 100: Cost = 10000
Sequence length 500: Cost = 250000
Sequence length 1000: Cost = 1000000
Sequence length 5000: Cost = 25000000
```


This simple example shows how quickly computation grows with sequence length.
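The sketch below sets the quadratic count beside the linear count of a recurrent model. It assumes one work unit per attention score and one per constant-cost state update. It also ignores constant factors such as hidden size, so only the growth rates are meaningful.

```python
def attention_cost(n):
    return n * n   # one score per token pair: O(n^2)

def recurrent_cost(n):
    return n       # one fixed-cost state update per token: O(n)

for n in [1000, 2000, 4000]:
    print(f"n={n}: attention={attention_cost(n):,}  recurrent={recurrent_cost(n):,}  "
          f"ratio={attention_cost(n) // recurrent_cost(n):,}")
```

Each doubling of n doubles the recurrent cost but quadruples the attention cost. The gap between the two therefore itself grows linearly with n.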

What Are State Space Models (SSMs)?

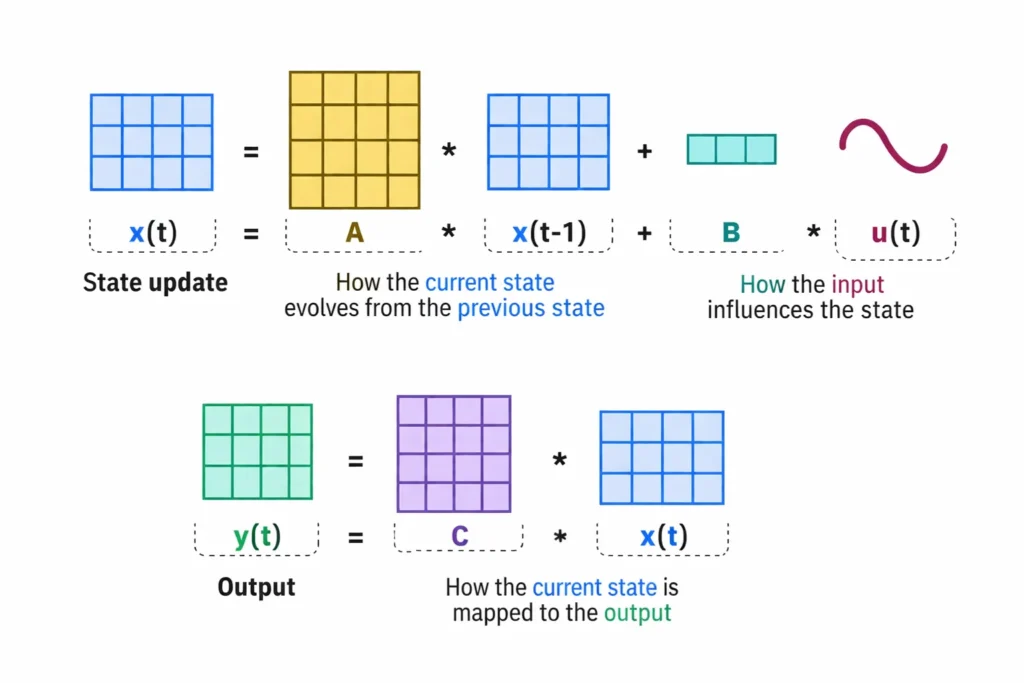

State Space Models (SSMs) offer a different approach. An SSM tracks a hidden state that evolves over time according to linear dynamics. SSMs are defined in continuous time by differential equations, but for sequence data they are discretized into step-by-step updates:

$$x[t] = A\,x[t-1] + B\,u[t], \qquad y[t] = C\,x[t]$$

Here x[t] is the hidden state at time t, u[t] is the input, and y[t] is the output. Each new output depends only on the previous state and the current input, with no need to revisit earlier inputs. This works because the state x[t] summarizes everything seen so far, which also makes the model recurrent. The formulation comes from control theory and signal processing. In machine learning, models such as S4, S5, and Mega use structured matrices A, B, and C in their SSMs to handle extremely long-range dependencies.

- SSMs describe sequences through linear state updates that govern how the hidden state evolves.

- The state vector x[t] encodes all past history up to step t.

- SSMs, long used in control theory, have found new applications in deep learning for modeling time-series data and language.
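The NumPy sketch below makes "the state encodes all past history" concrete. It runs the discrete update x[t] = A x[t-1] + B u[t], y[t] = C x[t] with a scalar input; the matrix sizes and random values are arbitrary assumptions. Unrolling the recurrence shows that y[t] is a weighted sum of every past input, with weights C A^k B, and the code checks that the step-by-step and unrolled forms agree. S4-style models use this unrolled (convolutional) form to train in parallel.

```python
import numpy as np

rng = np.random.default_rng(0)
d_state, seq_len = 4, 10
A = np.diag(rng.uniform(0.5, 0.9, d_state))   # stable diagonal state matrix (illustrative)
B = rng.normal(size=(d_state, 1))
C = rng.normal(size=(1, d_state))
u = rng.normal(size=seq_len)                   # scalar input per time step

# Recurrent view: one constant-size state carried through time
x = np.zeros((d_state, 1))
y_rec = []
for t in range(seq_len):
    x = A @ x + B * u[t]
    y_rec.append((C @ x).item())

# Unrolled view: y[t] = sum_k C A^k B u[t-k] over all past inputs
K = [(C @ np.linalg.matrix_power(A, k) @ B).item() for k in range(seq_len)]
y_conv = [sum(K[k] * u[t - k] for k in range(t + 1)) for t in range(seq_len)]

print(np.allclose(y_rec, y_conv))   # True
```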

Why SSMs Are More Efficient

Why are SSMs efficient? Each update depends only on the previous state and the current input. Every step therefore takes constant time, and processing n tokens takes O(n) time. No attention matrix grows with the sequence. The recurrence can be written as follows (the sizes and random matrices are illustrative):

```python
import torch

d, d_in, seq_len = 16, 8, 100     # state size, input size, sequence length (illustrative)
A = 0.1 * torch.randn(d, d)       # state transition matrix
B = torch.randn(d, d_in)          # input matrix
C = torch.randn(1, d)             # output matrix
inputs = torch.randn(seq_len, d_in)

state = torch.zeros(d)
outputs = []
for u in inputs:                  # O(n) loop over the sequence
    state = A @ state + B @ u     # constant-time update per token
    y = C @ state
    outputs.append(y)
```

This linear recurrence lets SSMs process long sequences efficiently. Mamba and other modern SSMs combine the recurrent form with parallel computation to speed up training. They can reach Transformer-level accuracy on long-sequence tasks while using less compute, because they never build the quadratic attention matrix.

- SSM inference is linear-time: each token update is constant work.

- Long-range context is captured via structured matrices (e.g. HiPPO-based A).

- State-space models (like Mamba) train in parallel (like Transformers) but stay O(n) at inference.
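The memory side of the same argument can be checked with back-of-the-envelope arithmetic. The sketch compares a Transformer's KV cache, which stores a key and a value vector per past token per layer, with an SSM's fixed-size recurrent state. The model dimensions are hypothetical round numbers, and the SSM count ignores small extras such as convolution buffers.

```python
def kv_cache_bytes(seq_len, n_layers=24, d_model=1024, bytes_per_value=2):
    # A key and a value vector for every past token, in every layer (fp16 = 2 bytes)
    return 2 * seq_len * n_layers * d_model * bytes_per_value

def ssm_state_bytes(n_layers=24, d_inner=2048, d_state=16, bytes_per_value=2):
    # One fixed-size state per layer, independent of how many tokens were seen
    return n_layers * d_inner * d_state * bytes_per_value

for seq_len in [1_000, 10_000, 100_000]:
    kv_mb = kv_cache_bytes(seq_len) / 1e6
    ssm_mb = ssm_state_bytes() / 1e6
    print(f"{seq_len:>7,} tokens: KV cache {kv_mb:>8.1f} MB vs SSM state {ssm_mb:.1f} MB")
```

The KV cache grows in proportion to the sequence length, while the SSM state stays the same size no matter how long the sequence gets.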

What Makes Mamba4 Different

Mamba4 combines the strengths of SSMs with new features. It extends the Mamba SSM architecture, whose key ingredient is an input-dependent selection mechanism. In a classic SSM, the trained matrices (A, B, C) stay the same for every input. Mamba instead computes B, C, and the step size Δ from each token, so they vary from token to token and across the batch.

This brings two main advantages. First, the model can focus on the information most relevant to the current input. Second, it remains efficient because the core recurrence still runs in linear time. The following sections present the main concepts.

Selective State Space Models (Core Idea)

Mamba replaces the fixed recurrence of earlier SSMs with a Selective SSM block. The block adds two capabilities: a parallel scan and input-dependent filtering. The scan extracts the essential signals from the sequence and folds them into the state, discarding unnecessary information. Maarten Grootendorst's visual guide to Mamba illustrates this selective scan as a process that filters out background noise. With this mechanism, Mamba approaches Transformer-level modeling power, while its compact state keeps the same size throughout the sequence.

- Selective scan: The model dynamically filters and retains useful context while ignoring noise.

- Compact state: Only a fixed-size state is maintained, similar to an RNN, giving linear inference.

- Parallel computation: The “scan” is implemented via an associative parallel algorithm, so GPUs can batch many state updates.

Input-Dependent Selection Mechanism

Mamba's selection mechanism is data-dependent: the input determines the SSM parameters. For each token, the model computes B, C, and Δ from that token's embedding. The current input therefore steers how the state is updated, rather than B and C staying unchanged across the whole sequence.

B_t = f_B(input[t]), C_t = f_C(input[t])

Here f_B and f_C are learned functions (in the original Mamba, linear projections of the input). This is how Mamba selectively “remembers” or “forgets” information. A highly relevant token can produce larger parameters and therefore a larger state update, while an irrelevant token barely changes the state. Because the parameters now depend on the input, the SSM is no longer a fixed linear system, which lets Mamba4 adapt to different kinds of input.

- Dynamic parameters: B, C, and the step size Δ are recomputed for every input token, so the model can adjust its behavior at each processing step.

- Selective gating: The state retains little from less important inputs while preserving important ones.
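The selection mechanism can be sketched in NumPy. This is a minimal, sequential illustration under stated assumptions, not Mamba's optimized kernel:

- `W_B`, `W_C`, and `W_delta` are hypothetical stand-ins for the learned functions f_B and f_C and for the step-size projection.
- A is diagonal with negative entries.
- A is discretized as exp(Δ·A), and B uses the common simplification Δ·B.

```python
import numpy as np

def softplus(z):
    return np.logaddexp(0.0, z)                  # log(1 + e^z), keeps Δ positive

def selective_ssm(x, A, W_B, W_C, W_delta):
    """x: (L, D) inputs; A: (D, N) negative entries; W_B, W_C: (D, N); W_delta: (D, D)."""
    L, D = x.shape
    h = np.zeros_like(A)                         # fixed-size state: D channels x N dims
    ys = np.empty((L, D))
    for t in range(L):
        B_t = x[t] @ W_B                         # (N,) input-dependent B
        C_t = x[t] @ W_C                         # (N,) input-dependent C
        delta = softplus(x[t] @ W_delta)         # (D,) input-dependent step size per channel
        A_bar = np.exp(delta[:, None] * A)       # (D, N) discretized state transition
        B_bar = delta[:, None] * B_t[None, :]    # (D, N) simplified discretization of B
        h = A_bar * h + B_bar * x[t][:, None]    # selective state update
        ys[t] = h @ C_t                          # (D,) readout with input-dependent C
    return ys

rng = np.random.default_rng(0)
L, D, N = 6, 4, 8
x = rng.normal(size=(L, D))
A = -np.exp(rng.normal(size=(D, N)))             # negative entries keep the state stable
y = selective_ssm(x, A, rng.normal(size=(D, N)), rng.normal(size=(D, N)),
                  0.1 * rng.normal(size=(D, D)))
print(y.shape)   # (6, 4)
```

The step size is what makes the selection work. When Δ_t is close to zero, exp(Δ_t·A) ≈ 1 and Δ_t·B_t ≈ 0, so the token is essentially skipped and the state is kept. When Δ_t is large, exp(Δ_t·A) ≈ 0, so the old state is largely forgotten and the current input dominates.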

Linear-Time Complexity Explained

Mamba4 achieves linear-time O(n) inference by avoiding full token-to-token matrices and processing tokens through a recurrence. A parallel scan algorithm inside the SSM lets GPUs evaluate these state updates simultaneously instead of strictly one after another. Each token costs a constant amount of work, so a sequence of length n needs O(n) work, not O(n²). This makes Mamba4 faster and more memory-efficient than Transformers on long sequences.

- Recurrent updates: Each token updates the state once which results in O(n) total cost.

- Parallel scan: The state-space recursion is implemented with an associative scan (prefix-sum) algorithm, which GPUs can execute in parallel.

- Efficient inference: Mamba4 runs inference at RNN-like speed while still capturing long-range patterns.
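The associative scan can be illustrated on the scalar recurrence h_t = a_t·h_{t-1} + b_t. This has the same shape as a diagonal selective SSM update once the discretized Ā_t and B̄_t·x_t are computed. Pairs (a, b) compose associatively, so prefixes can be combined in any grouping.

The sketch uses the simple Hillis-Steele scan (about log2(n) rounds, O(n log n) total work) and checks it against the sequential loop. Work-efficient variants such as Blelloch's scan bring the total work back to O(n). The vectorized NumPy slices stand in for parallel GPU threads.

```python
import numpy as np

def combine(left, right):
    """Compose two steps of h_t = a_t * h_{t-1} + b_t: apply `left`, then `right`."""
    a_l, b_l = left
    a_r, b_r = right
    return a_l * a_r, a_r * b_l + b_r

def sequential_scan(a, b):
    h, out = 0.0, []
    for a_t, b_t in zip(a, b):
        h = a_t * h + b_t                    # one step at a time: n dependent steps
        out.append(h)
    return np.array(out)

def parallel_scan(a, b):
    """Hillis-Steele inclusive scan: about log2(n) rounds of independent combines."""
    a, b = np.array(a, dtype=float), np.array(b, dtype=float)
    n, step = len(a), 1
    while step < n:
        a_prev, b_prev = a.copy(), b.copy()
        # Every position i >= step combines with position i - step; the vectorized
        # update stands in for all positions being processed in parallel
        a[step:], b[step:] = combine((a_prev[:-step], b_prev[:-step]),
                                     (a_prev[step:], b_prev[step:]))
        step *= 2
    return b                                 # b[i] now equals h_i (with h_{-1} = 0)

rng = np.random.default_rng(0)
a, b = rng.uniform(0.5, 1.0, size=16), rng.normal(size=16)
print(np.allclose(sequential_scan(a, b), parallel_scan(a, b)))   # True
```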

Mamba4 Architecture

Mamba4Rec processes data in three stages: Embedding, Mamba Layers, and Prediction. The Mamba layer is the core of the system. It contains a Mamba block, which holds the SSM, followed by a position-wise feed-forward network (PFFN). Several Mamba layers can be stacked, but one layer is usually sufficient. Layer normalization and residual connections keep training stable.

Overall Architecture Overview

The Mamba4 model is built from the following components:

- Embedding Layer: The Embedding Layer creates a dense vector representation for each input item or token ID before applying dropout and layer normalization.

- Mamba Layer: Each Mamba Layer contains a Mamba block followed by a position-wise Feed-Forward Network (PFFN). The Mamba block encodes the sequence with selective SSMs; the PFFN adds further processing per position.

- Stacking: Multiple Mamba layers can be stacked. The paper notes one layer often suffices, but stacking can be used for extra capacity.

- Prediction Layer: After the last Mamba layer, a linear (or softmax) head predicts the next item or token.

A Mamba layer plays the same role as a Transformer block, which combines attention with a feed-forward network. Its block extracts local features through convolution and tracks long-range context through state updates, and the FFN then processes each position.

Embedding Layer

The embedding layer in Mamba4Rec converts each input ID into a learnable d-dimensional vector using an embedding matrix. Dropout and layer normalization help prevent overfitting and stabilize training. While positional embeddings can be added, they are less important because the SSM’s recurrent structure already captures sequence order. As a result, including positional embeddings has minimal impact on performance compared to Transformers.

- Token embeddings: Each input item/token ID → d-dimensional vector.

- Dropout & Norm: Embeddings are regularized with dropout and layer normalization.

- Positional embeddings: Optional learnable positions, added as in Transformers. They are not strictly needed, because Mamba's state update already encodes the order of the sequence.
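An embedding layer along these lines can be sketched in PyTorch. This is an illustrative sketch, not Mamba4Rec's code. The `EmbeddingLayer` name, the `use_positions` flag, `max_len`, the dropout rate, and reserving ID 0 for padding are all assumptions.

```python
import torch
import torch.nn as nn

class EmbeddingLayer(nn.Module):
    """Token embedding -> optional learned positions -> dropout -> LayerNorm (sketch)."""

    def __init__(self, num_items, d_model, max_len=200, dropout=0.1, use_positions=False):
        super().__init__()
        self.token_emb = nn.Embedding(num_items, d_model, padding_idx=0)  # ID 0 = padding (assumed)
        self.pos_emb = nn.Embedding(max_len, d_model) if use_positions else None
        self.dropout = nn.Dropout(dropout)
        self.norm = nn.LayerNorm(d_model)

    def forward(self, ids):                      # ids: (batch, seq_len) integer IDs
        h = self.token_emb(ids)                  # (batch, seq_len, d_model)
        if self.pos_emb is not None:
            positions = torch.arange(ids.size(1), device=ids.device)
            h = h + self.pos_emb(positions)      # broadcast over the batch
        return self.norm(self.dropout(h))

layer = EmbeddingLayer(num_items=1000, d_model=64, use_positions=True)
ids = torch.randint(1, 1000, (2, 10))
print(layer(ids).shape)   # torch.Size([2, 10, 64])
```

Setting `use_positions=False` gives the position-free variant that the recurrent structure makes possible.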

Mamba Block (Core Component)

The Mamba block is the main component of Mamba4. It takes an input of shape (batch, sequence length, hidden dim) and produces an output of the same shape, enriched with contextual information. Internally it runs three steps:

- a convolution with an activation function;
- a selective SSM update;
- a residual connection followed by an output projection.

Convolution + Activation

The block first expands its input. A linear projection maps each token into a larger hidden dimension, and the result passes through a 1D convolution and then the SiLU activation function. The convolution uses a kernel of size 3, so each position mixes information from a small window of nearby tokens. The sequence of operations is as follows, in pseudocode where `linear_proj` and `conv1d` stand for the block's layers:

```python
h = linear_proj(x)        # expand dimensionality
h = F.silu(conv1d(h))     # local convolution + SiLU nonlinearity
```

This enriches each token’s representation before the state update. The convolution helps capture local patterns, while SiLU adds nonlinearity.
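A runnable version of this step can be sketched in PyTorch under stated assumptions. The module name `ConvExpand`, the 2× expansion factor, and the depthwise (one filter per channel) convolution are illustrative choices, and the kernel size of 3 follows the text. The convolution is made causal, as in the `mamba_ssm` reference code: it is padded by kernel_size - 1 and the extra right-hand outputs are trimmed, so each position sees only itself and the tokens before it. The usage lines check this locality by perturbing one token.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class ConvExpand(nn.Module):
    """Linear expansion -> causal depthwise Conv1d -> SiLU (illustrative sketch)."""

    def __init__(self, d_model, expand=2, kernel_size=3):
        super().__init__()
        d_inner = expand * d_model
        self.linear_proj = nn.Linear(d_model, d_inner)               # expand dimensionality
        # One filter per channel; pad kernel_size - 1 so outputs can be trimmed to be causal
        self.conv1d = nn.Conv1d(d_inner, d_inner, kernel_size,
                                groups=d_inner, padding=kernel_size - 1)

    def forward(self, x):                            # x: (batch, seq_len, d_model)
        seq_len = x.size(1)
        h = self.linear_proj(x).transpose(1, 2)      # Conv1d expects (batch, channels, seq_len)
        h = self.conv1d(h)[..., :seq_len]            # position t sees t - kernel_size + 1 .. t
        return F.silu(h.transpose(1, 2))             # back to (batch, seq_len, d_inner)

torch.manual_seed(0)
block = ConvExpand(d_model=8)
x = torch.randn(1, 10, 8)
x_changed = x.clone()
x_changed[0, 5] += 1.0                               # perturb token 5 only
with torch.no_grad():
    diff = (block(x) - block(x_changed)).abs().sum(dim=-1)[0]
changed = (diff > 1e-4).nonzero().flatten().tolist()
print(changed)   # [5, 6, 7]
```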

Selective SSM Mechanism

The selective SSM receives the processed sequence h as input. It applies the state-space recurrence with discretized SSM parameters to produce a hidden state at every time step. In Mamba, B, C, and the step size Δ are computed from h at each time step, which makes them input-dependent. The state update is:

state_t = A * state_{t-1} + B_t * h_t

y_t = C_t * state_t

Here A is a structured matrix initialized with HiPPO-based methods, while B_t and C_t depend on the input. The block outputs the sequence y. This selective SSM has several important properties:

- Recurrent (linear-time) update: Each new state is computed from the previous state and the current input only, so processing n tokens takes O(n) time. The update uses discretized parameters derived from continuous-time SSM theory.

- HiPPO initialization: The state matrix A is initialized with a structured HiPPO-based scheme, which helps it preserve long-range dependencies from the start.

- Selective scan algorithm: Mamba computes the states with a parallel scan, so the recurrence can be evaluated in parallel rather than strictly step by step.

- Hardware-aware design: GPU-optimized kernels fuse the convolution, state update, and output projection to reduce memory transfers.
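The discretization and initialization mentioned above can be illustrated for a diagonal A. The sketch assumes a diagonal state matrix and applies zero-order hold (ZOH), the discretization rule given in the Mamba paper, elementwise. For A it uses the S4D-Real scheme A_n = -(n+1), a diagonal simplification of HiPPO that Mamba's reference code uses to initialize A. The state size and B values are illustrative.

```python
import numpy as np

def zoh_discretize(a, b, delta):
    """Zero-order-hold discretization for a diagonal SSM (applied elementwise)."""
    a_bar = np.exp(delta * a)                # A_bar = exp(Δ A)
    b_bar = (a_bar - 1.0) / a * b            # B_bar = (Δ A)^-1 (exp(Δ A) - I) Δ B, diagonal case
    return a_bar, b_bar

d_state = 4
A = -np.arange(1, d_state + 1, dtype=float)  # S4D-Real init: A_n = -(n + 1) -> [-1, -2, -3, -4]
B = np.ones(d_state)

for delta in [0.01, 0.1, 1.0]:
    A_bar, B_bar = zoh_discretize(A, B, delta)
    print(f"delta={delta}: A_bar={np.round(A_bar, 3)}, B_bar={np.round(B_bar, 3)}")
```

Because every entry of A is negative, 0 < Ā < 1, so the state decays instead of exploding. For small Δ, Ā ≈ 1 + Δa and B̄ ≈ Δb, which recovers the simple Euler step.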


Residual Connections

After the SSM stage, a skip connection leads to the block's final output. The convolution output h is added to the SiLU-activated SSM output, and the sum passes through a final linear layer. Pseudo-code:

```python
state = selective_ssm(h)
out = out_proj(h + F.silu(state))   # residual + output projection
```

The residual link preserves the underlying signal and makes training more stable. As is standard, layer normalization follows the addition. The Mamba block's output keeps the input shape while adding state-based context and preserving the existing signal.

Mamba Layer and Feed Forward Network

Each Mamba layer consists of one Mamba block followed by one Position-wise Feed-Forward Network (PFFN). The PFFN is a standard component (also used in Transformers) that processes each position independently. It consists of two dense (fully connected) layers with a GELU activation between them:

```python
ffn_output = GELU(x @ W1 + b1) @ W2 + b2   # two-layer MLP
```

The PFFN first expands the hidden dimension and then projects back to the original size. This lets the model extract richer feature interactions at each position after the Mamba block has mixed in context from other tokens. Mamba4 applies dropout and layer normalization for regularization after both the Mamba block and the FFN.

- Position-wise FFN: Two dense layers per token, with GELU activation.

- Regularization: Dropout and LayerNorm after both the block and the FFN (mirroring Transformer style).
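The layer structure described above can be sketched in PyTorch: a Mamba block, then a PFFN, each followed by dropout and LayerNorm. Several details are assumptions for illustration: the class names, the 4× inner size, the exact placement of dropout, and the residual connection around each sub-layer, which follows the Transformer convention. `mixer` is any module that maps (batch, seq_len, d_model) to the same shape.

```python
import torch
import torch.nn as nn

class PositionwiseFFN(nn.Module):
    """FFN(x) = GELU(x W1 + b1) W2 + b2, then dropout, residual add and LayerNorm."""

    def __init__(self, d_model, d_inner=None, dropout=0.1):
        super().__init__()
        d_inner = d_inner or 4 * d_model           # 4x expansion is an assumed default
        self.w1 = nn.Linear(d_model, d_inner)      # expand
        self.w2 = nn.Linear(d_inner, d_model)      # project back
        self.act = nn.GELU()
        self.dropout = nn.Dropout(dropout)
        self.norm = nn.LayerNorm(d_model)

    def forward(self, x):                          # x: (batch, seq_len, d_model)
        h = self.w2(self.dropout(self.act(self.w1(x))))
        return self.norm(x + self.dropout(h))      # residual + LayerNorm

class MambaLayer(nn.Module):
    """One layer = sequence mixer (the Mamba block) + PFFN."""

    def __init__(self, mixer, d_model, dropout=0.1):
        super().__init__()
        self.mixer = mixer
        self.dropout = nn.Dropout(dropout)
        self.norm = nn.LayerNorm(d_model)
        self.ffn = PositionwiseFFN(d_model, dropout=dropout)

    def forward(self, x):
        h = self.norm(x + self.dropout(self.mixer(x)))   # block -> dropout -> residual -> LayerNorm
        return self.ffn(h)

layer = MambaLayer(mixer=nn.Identity(), d_model=64)      # nn.Identity stands in for a real block
x = torch.randn(2, 10, 64)
print(layer(x).shape)   # torch.Size([2, 10, 64])
```

If the `mamba_ssm` package is installed (it requires a CUDA GPU), its `Mamba(d_model=64)` module is one candidate for `mixer` in place of the stand-in.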

Effect of Positional Embeddings

Transformers rely on positional embeddings to represent sequence order, but Mamba4’s SSM captures order through its internal state updates. Each step naturally reflects position, making explicit positional embeddings largely unnecessary and offering little theoretical benefit.

Mamba4 maintains sequence order through its recurrent structure. While it still allows optional positional embeddings in the embedding layer, their importance is much lower compared to Transformers.

- Inherent order: Because the hidden state is updated token by token, sequence position is built into the computation, which makes explicit position information largely unnecessary.

- Optional embeddings: If used, learnable position vectors are added to the token embeddings. This typically changes performance only slightly.
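The claim that a recurrence captures order by itself can be checked with a toy comparison. The sketch makes two simplifying assumptions. First, single-head self-attention has no positional embeddings and uses the inputs directly as queries, keys, and values (no learned projections). Second, a scalar-decay recurrence stands in for an SSM. Reordering the tokens merely reorders attention's outputs, so attention cannot tell the two orders apart, while the recurrence's final state changes.

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 4))              # 5 tokens, 4 features
perm = np.array([4, 2, 0, 3, 1])         # an arbitrary reordering of the tokens

def self_attention(x):
    """Single-head attention with no positional information (q = k = v = x)."""
    scores = x @ x.T / np.sqrt(x.shape[1])
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ x

def recurrent_final_state(x, a=0.8):
    """Toy recurrence h_t = a * h_{t-1} + x_t, a stand-in for an SSM state update."""
    h = np.zeros(x.shape[1])
    for x_t in x:
        h = a * h + x_t
    return h

# Attention is permutation-equivariant: shuffled inputs give shuffled outputs
print(np.allclose(self_attention(x[perm]), self_attention(x)[perm]))            # True
# The recurrence is order-sensitive: a different order gives a different state
print(np.allclose(recurrent_final_state(x[perm]), recurrent_final_state(x)))    # False
```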

Role of Feed Forward Network

The position-wise Feed-Forward Network (PFFN) is the second sub-layer of each Mamba layer. After the Mamba block has mixed in context, the PFFN adds further nonlinear processing and combines features within each position. Each token vector passes through two linear transformations with a GELU activation in between:

FFN(x) = GELU(xW_1 + b_1) W_2 + b_2

W_1 expands the vector to a larger inner size, and W_2 projects it back to the original size. This lets the model learn intricate interactions between hidden features at every position. The FFN adds computation but makes the model more expressive. In Mamba4Rec, the FFN, together with dropout and normalization, helps the model capture user behavior patterns that go beyond simple linear dynamics.

- Two-layer MLP: Applies two linear layers with GELU per token.

- Feature expansion: Expands and projects the hidden dimension to capture higher-order patterns.

- Regularization: Dropout and normalization keep training stable.

Single vs Stacked Layers

Mamba4Rec lets users choose how deep the model is. A single Mamba layer (one block plus one FFN) is often very powerful on its own: the authors found that it already outperforms RNN and Transformer models of similar dimensions. Stacking two layers gives slight improvements, but deep stacking is not essential. When layers are stacked, residual connections are essential because they let information from early layers reach higher layers. Mamba4 therefore supports both a fast, shallow configuration and a deeper one with extra capacity.

- One layer often enough: A single Mamba block combined with an FFN can already model sequence dynamics effectively.

- Stacking: Additional layers can be added for complex tasks, but show diminishing returns.

- Residuals are key: Skip paths let gradients flow through the stack and carry the original inputs to higher layers.
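A toy experiment shows how skip paths affect gradient flow. The sketch stacks 20 hypothetical stand-in layers, each a small random linear map followed by tanh rather than a real Mamba layer. It then measures how much gradient reaches the input with and without residual connections. The weight scale of 0.05 is an arbitrary choice that makes each plain layer shrink its input.

```python
import torch

torch.manual_seed(0)
depth, dim = 20, 32
# Hypothetical stand-in layers: small random linear maps + tanh (not real Mamba layers)
weights = [torch.randn(dim, dim) * 0.05 for _ in range(depth)]

def input_grad_norm(residual: bool) -> float:
    """Norm of d(sum of outputs)/d(input) after passing through the whole stack."""
    x = torch.randn(1, dim, requires_grad=True)
    h = x
    for W in weights:
        f = torch.tanh(h @ W)
        h = h + f if residual else f        # skip path vs. plain stacking
    h.sum().backward()
    return x.grad.norm().item()

print(f"with residuals:    {input_grad_norm(True):.3e}")
print(f"without residuals: {input_grad_norm(False):.3e}")
```

Without skip paths, the gradient shrinks multiplicatively at every layer. With them, the identity path keeps it at a usable scale regardless of depth.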

Conclusion

Mamba4 advances sequence modeling by addressing Transformer limitations with a state space mechanism that enables efficient long-sequence processing. It achieves linear-time inference using recurrent hidden states and input-dependent gating, while still capturing long-range dependencies. Mamba4Rec matches or surpasses RNNs and Transformers in both accuracy and speed, resolving their typical trade-offs.

By combining deep model expressiveness with SSM efficiency, Mamba4 is well-suited for applications like recommendation systems and language modeling. Its success suggests a broader shift toward SSM-based architectures for handling increasingly large and complex sequential data.

Frequently Asked Questions

Q1. What problem does Mamba4 solve compared to Transformers?

A. It overcomes quadratic complexity, enabling efficient long-sequence processing with linear-time inference.

Q2. How does Mamba4 capture long-range dependencies efficiently?

A. It uses recurrent hidden states and input-dependent gating to track context without expensive attention mechanisms.

Q3. Why is Mamba4Rec considered better than RNNs and Transformers?

A. It matches or exceeds their accuracy and speed, removing the typical trade-off between performance and efficiency.

Hello! I’m Vipin, a passionate data science and machine learning enthusiast with a strong foundation in data analysis, machine learning algorithms, and programming. I have hands-on experience in building models, managing messy data, and solving real-world problems. My goal is to apply data-driven insights to create practical solutions that drive results. I’m eager to contribute my skills in a collaborative environment while continuing to learn and grow in the fields of Data Science, Machine Learning, and NLP.
