Transformers power modern NLP systems, replacing earlier RNN and LSTM approaches. Their ability to process all words in parallel enables efficient and scalable language modeling, forming the backbone of models like GPT and Gemini.

In this article, we break down how Transformers work, starting from text representation to self-attention, multi-head attention, and the full Transformer block, showing how these components come together to generate language effectively.

How transformers power models like GPT, Claude, and Gemini

Modern AI systems use transformer architectures because they handle large-scale language processing well. These models are trained on large text datasets so that they can learn the patterns of language, and each model family adapts the basic design to its own needs. The GPT models (such as GPT-4 and GPT-5) follow the decoder-only Transformer design, i.e., a stack of decoder layers with masked self-attention. Claude (Anthropic) and Gemini (Google) also use similar transformer stacks, with their own custom modifications. Google’s Gemma models likewise build on the transformer design from the “Attention Is All You Need” paper and generate text one token at a time.

Part 1: How Text Becomes Machine-Readable

Before a transformer can do anything, text must be converted into numerical form. This starts with tokenization and embeddings: the text is split into distinct tokens, and each token is then mapped to a vector representation. Positional encodings are also needed, because they tell the model how the words are arranged in a sentence. In this section we break down each step.

Step 1: Tokenization: Converting Text into Tokens

At its core, an LLM cannot directly ingest raw text characters. Neural networks operate on numbers, not text. Tokenization converts a complete text string into separate units, each of which receives its own numeric identifier.

Why LLMs Cannot Understand Raw Text

The model requires numeric input, but raw text is just a string of characters. A simple word-to-index mapping does not work well either, because language has an effectively unlimited number of word forms through tenses, plurals, and newly coined vocabulary. Raw text therefore lacks the numerical structure that neural networks need for their mathematical computations. For example, consider the sentence: Transformers changed natural language processing

This must first be converted into a sequence of tokens before the model can process it.

How Tokenization Works

Tokenization segments text into smaller units that correspond to linguistic components. A token can represent a word, a subword, a character, or a punctuation mark.

Each token is assigned a unique numerical ID, which the model uses during both training and inference.
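As a rough illustration of the text-to-tokens-to-IDs idea, here is a minimal sketch of a greedy longest-match subword tokenizer. The vocabulary, the `<unk>` fallback, and the function names are all hypothetical and chosen only for this example; real tokenizers such as BPE or WordPiece learn their vocabularies from data and use more involved splitting rules.

```python
# Hypothetical toy vocabulary; real tokenizers learn tens of thousands of entries.
VOCAB = {"<unk>": 0, "transform": 1, "ers": 2, "changed": 3, "natural": 4,
         "language": 5, "process": 6, "ing": 7}

def tokenize(text):
    """Greedy longest-match split of each lowercased word into known pieces."""
    tokens = []
    for word in text.lower().split():
        start = 0
        while start < len(word):
            # Try the longest remaining substring first, then shrink it.
            for end in range(len(word), start, -1):
                piece = word[start:end]
                if piece in VOCAB:
                    tokens.append(piece)
                    start = end
                    break
            else:
                # No known piece starts here: emit <unk> for one character.
                tokens.append("<unk>")
                start += 1
    return tokens

def encode(text):
    """Map each token to its numeric ID."""
    return [VOCAB[token] for token in tokenize(text)]

sentence = "Transformers changed natural language processing"
print(tokenize(sentence))
print(encode(sentence))
```

With this made-up vocabulary, common words stay whole while "transformers" and "processing" are split into two known pieces each, and the model only ever sees the resulting list of integers.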

Types of Tokens Used in LLMs

Different tokenization strategies exist depending on the model architecture and vocabulary design. Common methods include Byte-Pair Encoding (BPE), WordPiece, and Unigram. These methods keep common words as single tokens while splitting uncommon words into smaller subword units.

For example, the word “transformers” might remain whole, while “unbelievability” might break down into “un”, “believ”, and “ability”. Subword tokenization lets models handle new or uncommon terms by combining known word pieces. Tokenizers treat word pieces as basic units, and treat special tokens and punctuation marks as distinct units.

Step 2: Token Embeddings: Turning Tokens into Vectors

Once the text has been tokenized, the model looks up an embedding vector for each token ID. These token embeddings represent word meaning as dense numeric vectors.

What is an Embedding?

An embedding is a numeric vector representation of a token. You can think of it as each token having coordinates in a high-dimensional space. The word “cat”, for example, might map to a vector with 768 dimensions. The model learns these embeddings during training. Tokens with similar meanings end up with vectors that lie close to one another: the words “Hello” and “Hi” have nearby embeddings, while “Hello” and “Goodbye” are far apart.

For illustration, the embeddings of Hi and Hello are quite similar:

Hi: [0.25, -0.18, 0.91, …], Hello: [0.27, -0.16, 0.88, …]

The embeddings of Hi and GoodBye, by contrast, are quite distant from each other:

Hi: [0.25, -0.18, 0.91, …], GoodBye: [-0.60, 0.75, -0.20, -0.55, …]
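One common way to put a number on "similar" versus "distant" is cosine similarity. The sketch below applies it to the first three components of the illustrative vectors above. Truncating to three components is an assumption made for the example, since real embeddings have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# First three components of the illustrative vectors from the text.
hi = [0.25, -0.18, 0.91]
hello = [0.27, -0.16, 0.88]
goodbye = [-0.60, 0.75, -0.20]

print(round(cosine_similarity(hi, hello), 3))    # close to 1
print(round(cosine_similarity(hi, goodbye), 3))  # negative
```

A value near 1 indicates vectors pointing in almost the same direction, while a negative value indicates vectors pointing in roughly opposite directions.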

Semantic Meaning in Vector Space

Embeddings capture meaning, so we can assess relationships between words by measuring vector similarity. The vectors for “cat” and “dog” lie closer together than those for “cat” and “table” because the first pair is more closely related in meaning. At this stage, however, a token’s embedding is context-free: it encodes only the general meaning of the word, so “cat” is represented as an animal and “run” as a kind of motion, regardless of the sentence they appear in. Context is brought in later by the attention layers.

For example:

- The words king and queen tend to lie close together.

- The fruits apple and banana tend to cluster together.

- The words car and vehicle occupy nearby regions of the space.

This spatial structure helps the model learn how words relate to one another.

Why Similar Words Have Similar Vectors

During training, the model adjusts its embeddings so that words occurring in similar contexts end up with similar vectors. This is a side effect of the next-word prediction objective. Over the course of training, interchangeable and related words develop similar embeddings, which helps the model generalize its predictions. The embedding layer thus learns semantic relationships: synonyms are grouped together, while different groups of related concepts occupy their own regions of the space. This is why “hello” and “hi” end up with similar vectors, and it is how the Transformer’s embeddings capture meaning from the ground up.

For example:

“The cat sat on the ___” and “The dog sat on the ___”.

Because cat and dog appear in similar contexts, their embeddings move closer in vector space.

Step 3: Positional Encoding: Teaching the Model Word Order

A key limitation of attention is that it cannot determine the order of tokens on its own, so it needs explicit sequence information. Until we add positional information to the embeddings, the transformer treats the input as an unordered collection of words. Positional encoding is how the model receives word order information.

Why Transformers Need Positional Information

Transformers process all tokens simultaneously, unlike RNNs, which process them one after another. This parallelism makes transformers fast, but it also means that nothing in the architecture itself tracks order. If we fed the embeddings in directly, the Transformer would see them as an unordered set: without positional encodings, the model would treat “the cat sat” and “sat cat the” identically. The model needs positional information because word order affects meaning.

How Positional Encoding Works

Transformers typically add a positional encoding vector to each token embedding. The original paper used sinusoidal patterns based on the token index. Each position in the sequence has its own dedicated vector, which is added to the embedding of the token at that position. This is how order is established: the token at position 5 always receives that position’s vector, the token at position 6 receives a different one, and so forth. The positional vectors are combined with the embedding vectors before they enter the network, so the attention layers can effectively tell “this is the 3rd word” from “this is the 7th word”.

Without positional encoding, the network’s input would be disorganized, because all positional information would be lost. Positional encodings restore that information so the Transformer can distinguish sentences that differ only by word order.
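The sinusoidal scheme from the original paper can be sketched in a few lines. The sketch below assumes the standard formulation, with sine on even dimensions and cosine on odd dimensions, and each pair sharing one frequency. The function names and the tiny 4-dimensional embedding are hypothetical and used only for illustration.

```python
import math

def positional_encoding(seq_len, d_model):
    """Return seq_len vectors of size d_model (sin on even dims, cos on odd dims)."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share the frequency 1 / 10000^(2k / d_model).
            angle = pos / (10000 ** ((i // 2) * 2 / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def add_positions(embeddings):
    """Add the position vector for index p to the embedding at index p."""
    pe = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, pe)]

# The same hypothetical token embedding placed at positions 0 and 1.
same_token = [0.5, 0.5, 0.5, 0.5]
out = add_positions([same_token, same_token])
print(out[0] != out[1])  # the two positions are now distinguishable
```

Because every position receives a different vector, the same token at two different positions no longer looks identical to the attention layers.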

Why Word Order Matters in Language

Word order in natural language determines the actual meaning of a sentence. The sentences “The dog chased the cat” and “The cat chased the dog” contain the same words, yet they mean different things purely because of word order. An LLM needs to know word positions in order to capture these distinctions. Positional encoding gives attention the ability to work with sequential information, letting the model use absolute or relative position as needed.

Part 2: The Core Idea That Made Transformers Powerful

The key idea that makes transformers work is the self-attention mechanism, which lets the tokens in a sentence interact with one another directly.

Instead of processing text one token after another, self-attention lets every token examine all other tokens in the sequence at the same time.

Step 4: Self-Attention: How Tokens Understand Context

Self-attention is the mechanism through which each token in a sequence gathers information from all the other tokens. In every self-attention layer, each token computes attention scores against all tokens in the sequence.

The Core Intuition of Attention

When you read a sentence, you naturally relate the current word to the other words around it. Attention does something similar: each token decides which other words matter for understanding its own meaning, and the output for that token is a weighted combination of all token representations.

For example: The animal didn’t cross the street because it was too tired.

Here, the word “it” most likely refers to “animal”, not “street”. Self-attention is what allows the model to learn contextual relationships like this one.

Query, Key, and Value Explained Intuitively

Self-attention uses three vectors for each token: a query vector, a key vector, and a value vector. All three are generated from the token’s embedding through learned weight matrices. The query acts like a search request for particular information, the key describes what the token can offer to other tokens, and the value carries the content the token actually contributes.

- Query (Q): What the token is looking for in its surrounding context.

- Key (K): What the token advertises about itself, so other tokens can judge whether it is relevant to them.

- Value (V): The information the token passes along to the tokens that attend to it.

How Tokens Decide What to Focus On

Self-attention produces a matrix of attention scores covering every pair of tokens. For each token, we take the dot product of its query with the keys of all tokens in the sequence, scale the results (the original paper divides by the square root of the key dimension), and apply softmax to turn them into weights. The result is a probability distribution that indicates which tokens in the sequence matter most to the current token.

Each token then updates its own vector using a weighted sum of the value vectors, so the tokens with the highest weights contribute most. A word such as “it” will attend strongly to the nouns it refers to within the sentence. In short, attention weights are softmax-normalized dot products of Q and K, and each token’s new representation combines information from the other tokens according to their contextual importance.
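The computation described here can be written out as a small sketch of scaled dot-product attention, where the division by the square root of the key dimension follows the original paper. The Q, K, and V values are made up for a three-token example. In a real model they would come from learned projections of the token embeddings.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Return (outputs, weights) for scaled dot-product attention."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Dot product of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # New representation: weighted sum of all value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 3-token example with 2-dimensional vectors.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
outputs, weights = self_attention(Q, K, V)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` is one token's probability distribution over all tokens, and each output vector is the corresponding mix of value vectors.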

Why Attention Solved Long-Context Problems

Before Transformers, RNNs and CNNs struggled to handle long-range context effectively. Attention lets every token access every other token regardless of distance. Because self-attention processes the complete sequence at once, it can detect connections between words at the start and end of a long text. This ability to draw on the full context helps attention-based models perform well on tasks that depend on it, such as translation and summarization.

Step 5: Multi-Head Attention: Learning Multiple Relationships

Multi-head attention runs several attention operations in parallel, with each head using its own Q/K/V projections. This lets the model capture several kinds of relationships at the same time.

Why One Attention Mechanism Is Not Enough

With a single attention head, the model has to capture all the context in the text through one set of attention scores. Language, however, exhibits many kinds of patterns at once, including syntax, semantics, named entities, and coreference. A single head might capture one pattern (say, syntactic alignment) but miss other patterns.

Multi-head attention therefore uses separate “heads” that can each specialize in different patterns. Each head learns its own queries, keys, and values, so one head might track word order while another tracks semantic similarity and a third tracks specific phrases. Together, the heads give the model several complementary views of the same sentence.

How Multiple Attention Heads Work

The multi-head layer projects each token into h sets of Q/K/V vectors, one set per head. Each head computes self-attention independently, which yields h distinct context vectors for every token. These are concatenated and then passed through a linear projection. The result enriches each token’s representation with information from multiple attention channels. In short, multi-head attention uses several heads to identify different relationships within the same sequence.

The combined system learns more than any single head could, because each head works in its own subspace. For a sentence containing the word “bank”, one head might link it to “money” while another picks up cues that point to a riverbank. The combined output is a more detailed representation of the token. Many large models use 16 or more heads per layer.
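The split, attend, and concatenate structure of multi-head attention can be sketched as follows. This is a simplification under stated assumptions: the learned Q/K/V projections and the final linear projection are omitted, and each head simply works on its own slice of the input vectors. The helper names and the sample data are hypothetical.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        weights = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in keys])
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(len(values[0]))])
    return outputs

def split_heads(vectors, num_heads):
    """Cut every vector into num_heads equal slices, one list of rows per head."""
    head_dim = len(vectors[0]) // num_heads
    return [[vec[h * head_dim:(h + 1) * head_dim] for vec in vectors]
            for h in range(num_heads)]

def multi_head_attention(q, k, v, num_heads):
    # Each head attends within its own subspace (learned projections omitted).
    heads = [attention(qh, kh, vh)
             for qh, kh, vh in zip(split_heads(q, num_heads),
                                   split_heads(k, num_heads),
                                   split_heads(v, num_heads))]
    # Concatenate the head outputs token by token.
    return [[x for head in heads for x in head[t]] for t in range(len(q))]

# Hypothetical 3 tokens with 4-dimensional vectors, split across 2 heads.
x = [[1.0, 0.0, 0.5, 0.5], [0.0, 1.0, 0.5, -0.5], [1.0, 1.0, 0.0, 1.0]]
out = multi_head_attention(x, x, x, num_heads=2)
print(len(out), len(out[0]))
```

Each head produces its own attention pattern over the same tokens, and concatenation restores the original vector width so the result can be passed on to the next sublayer.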

Part 3: The Transformer Block (The Engine of LLMs)

A Transformer block combines attention with a simple feed-forward computation, and relies on residual connections and layer normalization as stabilizing mechanisms. The full model is built by stacking many such blocks. In this section we analyze a single block and then explain why LLMs need many layers.

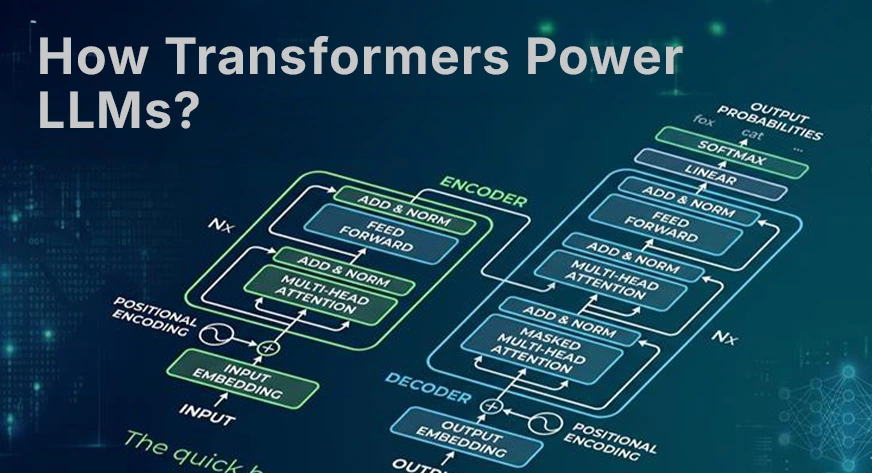

Step 6: The Transformer Decoder Block Architecture

The Transformer decoder block used in GPT-style models contains two sublayers: a masked self-attention layer, followed by a position-wise feed-forward neural network. Each sublayer is wrapped in two further components: a residual (“skip”) connection and layer normalization. The flowchart shows how the block operates.

Self-Attention Layer

The block’s first major sublayer is masked self-attention. “Masked” means that each token can attend only to itself and the tokens before it, which preserves autoregressive generation. The layer applies multi-head self-attention to every token as explained previously, drawing additional context from the prior tokens. Generative models use this masked variant, whereas encoders such as BERT use unmasked self-attention.
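To make the masking concrete, here is a minimal sketch that turns a square matrix of attention scores into causal attention weights. Restricting the softmax to the visible positions, as done here, gives the same result as the common trick of setting future scores to negative infinity before the softmax. The function name and the all-zero scores are hypothetical.

```python
import math

def causal_attention_weights(scores):
    """Row i may only attend to columns 0..i; later columns get weight 0."""
    weights = []
    for i, row in enumerate(scores):
        visible = row[: i + 1]  # the token itself and everything before it
        m = max(visible)
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        # Future positions contribute nothing.
        weights.append([e / total for e in exps] + [0.0] * (len(row) - i - 1))
    return weights

# Hypothetical scores: every token scores every other token equally.
scores = [[0.0, 0.0, 0.0] for _ in range(3)]
for row in causal_attention_weights(scores):
    print([round(w, 3) for w in row])
```

With equal scores, the first token can only attend to itself, the second splits its attention over two tokens, and the third over all three, so the weight matrix is lower triangular.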

Feed-Forward Neural Network (FFN)

After attention, each token vector is passed through a feed-forward network, and the same network is applied to every position independently. It is a simple two-layer perceptron: one linear layer that expands the dimension, a GeLU or ReLU nonlinearity, and another linear layer that projects back down. This position-wise feed-forward network lets the model apply a richer transformation to each token. It introduces nonlinearity, so the block can compute more than the linear weighted combinations that attention produces. Because the feed-forward network operates on each token individually, all tokens can be processed in parallel.
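A position-wise feed-forward network of this shape might look like the sketch below. The weights are hypothetical hand-picked numbers (a model dimension of 2 expanded to a hidden size of 4), and the GELU uses the common tanh approximation. A trained model would learn the weights and use far larger dimensions.

```python
import math

def gelu(x):
    """Tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))

def linear(vec, weight, bias):
    """Affine layer; weight holds one row per output unit."""
    return [sum(w * x for w, x in zip(row, vec)) + b for row, b in zip(weight, bias)]

def feed_forward(vec, w1, b1, w2, b2):
    hidden = [gelu(h) for h in linear(vec, w1, b1)]  # expand, then nonlinearity
    return linear(hidden, w2, b2)                    # project back to d_model

# Hypothetical weights: d_model = 2, hidden size = 4.
W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0]]
B1 = [0.0, 0.0, 0.0, 0.0]
W2 = [[0.5, 0.0, 0.5, 0.0], [0.0, 0.5, 0.0, 0.5]]
B2 = [0.0, 0.0]

tokens = [[1.0, 2.0], [0.0, 0.0]]
# The same network is applied to each token independently.
outputs = [feed_forward(t, W1, B1, W2, B2) for t in tokens]
print(outputs)
```

Nothing in `feed_forward` looks at neighboring tokens, which is why the list comprehension over `tokens` could run for all positions in parallel.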

Residual Connections

Every sublayer is wrapped in a residual connection: we add the layer’s input back to its output. The attention sublayer uses the following operation:

x = LayerNorm(x + Attention(x)); similarly for the FFN: x = LayerNorm(x + FFN(x)).

The skip connections let gradients flow smoothly through the network, which protects against vanishing gradients in deep architectures. They also make it easy for a sublayer to leave the signal largely unchanged when its contribution is small. Residuals make it possible to train many layers because they keep optimization stable.

Layer Normalization

Layer Normalization is applied after every addition. LayerNorm standardizes each token’s vector to have a mean of 0 and a variance of 1, which keeps activation magnitudes in a range that is manageable for training. Together, the skip connection and the normalization form the Add & Norm step, which guards against vanishing gradients and stabilizes training. This “post-norm” arrangement follows the original Transformer; some GPT-style models instead normalize before each sublayer. Without these components, a deep transformer would be difficult to train and would be likely to diverge.
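The Add & Norm step, x = LayerNorm(x + Sublayer(x)), can be sketched as below. For brevity this version leaves out the learned gain and bias that LayerNorm normally includes, and the `double` function is only a hypothetical stand-in for the attention or feed-forward sublayer.

```python
import math

def layer_norm(vec, eps=1e-5):
    """Standardize one token vector to mean 0 and (approximately) variance 1."""
    mean = sum(vec) / len(vec)
    var = sum((x - mean) ** 2 for x in vec) / len(vec)
    return [(x - mean) / math.sqrt(var + eps) for x in vec]

def add_and_norm(x, sublayer):
    """Residual connection followed by layer normalization."""
    y = sublayer(x)
    return layer_norm([a + b for a, b in zip(x, y)])

def double(vec):
    # Hypothetical stand-in for Attention(x) or FFN(x).
    return [2.0 * a for a in vec]

out = add_and_norm([1.0, 2.0, 3.0, 4.0], double)
print([round(v, 3) for v in out])
```

Whatever the sublayer returns, the output vector is rescaled to a standard range, which is what keeps activations from drifting as more blocks are stacked.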

Step 7: Stacking Transformer Layers

Modern LLMs stack many transformer layers in sequence, with each layer refining the output of the one before it. Typical models use dozens of blocks or more: GPT-2 small has 12 layers, GPT-3 has 96, and some newer models may use even more.

Why LLMs Use Dozens or Hundreds of Layers

The reason is simple: more layers give the model more capacity to learn complex features. Each layer transforms the representation, which develops from basic embeddings into increasingly high-level concepts. Early layers tend to pick up basic grammar and local patterns, whereas later layers capture more complex meaning and world knowledge. Depth and parameter count are among the main ways that model generations such as GPT-3.5 and GPT-4 can differ, although their exact layer and parameter counts have not been published.

How Representations Improve Across Layers

Each layer enriches the token representations with additional contextual information. After the first layer, each word vector already includes information from the related words it attended to. By the last layer, the vector has become a rich representation that reflects the meaning of the whole sentence. In this way, tokens progress from basic word meanings to deep semantic interpretations.

From Words to Deep Semantic Understanding

After passing through all the layers, a token’s vector is no longer just its original word embedding; it now encodes a refined understanding of the surrounding context. The vector for “bank” shifts toward “finance” when words such as “loan” and “interest” appear earlier in the text, and toward “river” when “water” and “fishing” appear.

The stack of transformer layers therefore progressively clarifies word meanings, resolves references, and captures fine detail. Each successive layer deepens the model’s understanding, which enables it to produce coherent, context-aware text.

Part 4: How LLMs Actually Generate Text

After all this encoding and context-building, how does an LLM produce words? LLMs are autoregressive models: they generate output one token at a time, with each prediction depending on the tokens generated so far. Here we explain the final steps: computing probabilities and sampling a token.

Step 8: Autoregressive Text Generation

In autoregressive generation, the model predicts the next token, appends it to the sequence, and runs another forward pass to predict the one after that.

Predicting the Next Token

The LLM starts with a prompt, which is a sequence of tokens. The prompt tokens are processed through the transformer layers, and the final output contains one vector for each position. To generate the next token, the model uses the vector at the last position, i.e., the end of the prompt. This vector is passed through a final linear layer, often called the unembedding layer, which produces a score (a logit) for every token in the vocabulary. These raw scores indicate how likely each token is to come next, but they are not yet probabilities.

The Role of Softmax and Probabilities

The logits are unnormalized scores, one for every possible token. The softmax function turns them into a probability distribution by exponentiating each logit and then normalizing so that the results sum to 1.

Softmax gives more probability to tokens with higher logits and pushes the rest toward zero. The result is a probability for every possible next token. Always choosing the most likely token (greedy decoding) tends to produce repetitive and uninteresting text, so modern models usually sample from the distribution with controlled randomness, which yields more diverse output.
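As a small worked example, the sketch below applies softmax to three made-up logits. The three-word vocabulary and the logit values are hypothetical, and subtracting the maximum logit before exponentiating is a standard numerical-stability step that does not change the result.

```python
import math

def softmax(logits):
    """Exponentiate each logit and normalize so the results sum to 1."""
    m = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical vocabulary and logits for the next token.
vocab = ["Paris", "London", "banana"]
logits = [5.0, 2.0, -1.0]
probs = softmax(logits)

for token, p in zip(vocab, probs):
    print(token, round(p, 4))

# Greedy decoding would simply pick the highest-probability token.
greedy = vocab[probs.index(max(probs))]
print(greedy)
```

The highest logit ends up with most of the probability mass, while the lowest is pushed close to zero, and greedy decoding would always return that top token.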

Sampling Strategies (Temperature, Top-K, Top-P)

To turn probabilities into a concrete choice, LLMs use sampling techniques:

- Temperature (T): We divide all logits by the temperature T before applying softmax. When T is below 1, the distribution becomes sharper and more peaked, so the model picks safer, more predictable words. When T is above 1, the distribution flattens, so uncommon words become more likely and the output becomes more inventive.

- Top-K sampling: We rank all tokens by probability and keep only the K most likely. With K set to 50, only the 50 most probable tokens are considered and all others receive zero probability. The probabilities of those K tokens are renormalized before one is sampled.

- Top-P (nucleus) sampling: Instead of a fixed K, we take the smallest set of tokens whose total probability mass reaches a threshold p. If p equals 0.95, we keep the top tokens until their cumulative probability reaches or exceeds 95%. In a high-confidence context (e.g., “The capital of France is”), only “Paris” (maybe plus one or two others) is considered. In a more creative context, many tokens can be included. Top-P adapts to the situation and is widely used (many APIs expose it as a setting).

Temperature, top-K, and top-P together control how random or deterministic the output is. Many LLM services let you adjust these settings, and the values you choose determine whether the output is more precise or more creative.
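The three controls can be combined in one small sketch. The function names, the order in which the filters are applied, and the example logits are assumptions made for illustration. Real inference libraries differ in details, such as whether top-P is computed before or after top-K renormalization, and a temperature of 0 would need special handling.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sampling_distribution(logits, temperature=1.0, top_k=None, top_p=None):
    """Return {token_index: probability} after temperature, top-K and top-P."""
    probs = softmax([z / temperature for z in logits])
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if top_k is not None:
        ranked = ranked[:top_k]  # keep only the K most likely tokens
    if top_p is not None:
        kept, cumulative = [], 0.0
        for i in ranked:  # smallest prefix whose mass reaches top_p
            kept.append(i)
            cumulative += probs[i]
            if cumulative >= top_p:
                break
        ranked = kept
    total = sum(probs[i] for i in ranked)
    return {i: probs[i] / total for i in ranked}  # renormalize the survivors

def sample(logits, rng, **kwargs):
    """Draw one token index from the filtered distribution."""
    dist = sampling_distribution(logits, **kwargs)
    return rng.choices(list(dist), weights=list(dist.values()), k=1)[0]

logits = [4.0, 3.0, 1.0, 0.0]  # hypothetical logits for a 4-token vocabulary
rng = random.Random(0)         # seeded only to make runs repeatable
print(sampling_distribution(logits, top_k=2))
print(sample(logits, rng, temperature=0.7, top_p=0.9))
```

Lowering the temperature or shrinking K and p concentrates the distribution on the top tokens, while raising them leaves more candidates available for sampling.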

Why Transformers Scale So Well

There are two primary reasons why transformers scale so well:

- Parallel Processing: Transformers replace sequential recurrence with matrix multiplications and attention, allowing multiple tokens to be processed at once. Unlike RNNs, they handle entire sentences in parallel on GPUs, which makes training much faster and lets a whole prompt be processed in a single pass at inference time.

- Handling Long Context: Transformers use attention to connect words directly, letting them capture long-range context far better than RNNs or CNNs. They can handle dependencies across thousands of tokens, enabling LLMs to process entire documents or conversations.

Conclusion

Transformers have fundamentally reshaped natural language processing by enabling models to process entire text sequences and capture complex relationships between words. From tokenization and embeddings to positional encoding and attention mechanisms, each component contributes to building a rich understanding of language.

Through transformer blocks, these representations are refined using attention layers, feed-forward networks, residual connections, and normalization. This pipeline enables LLMs to generate coherent text token by token, establishing transformers as the core foundation of modern AI systems such as GPT, Claude, and Gemini.

Frequently Asked Questions

Q1. How do transformers help LLMs understand language?

A. Transformers use self-attention and embeddings to capture context and relationships between words, enabling models to process entire sequences and understand meaning efficiently.

Q2. Why are transformers better than RNNs and LSTMs?

A. Transformers process all tokens in parallel and handle long-range dependencies effectively, making them faster and more scalable than sequential models like RNNs and LSTMs.

Q3. How do LLMs generate text using transformers?

A. LLMs predict the next token using probabilities from softmax and sampling methods, generating text step by step based on learned language patterns.

Hello! I’m Vipin, a passionate data science and machine learning enthusiast with a strong foundation in data analysis, machine learning algorithms, and programming. I have hands-on experience in building models, managing messy data, and solving real-world problems. My goal is to apply data-driven insights to create practical solutions that drive results. I’m eager to contribute my skills in a collaborative environment while continuing to learn and grow in the fields of Data Science, Machine Learning, and NLP.
