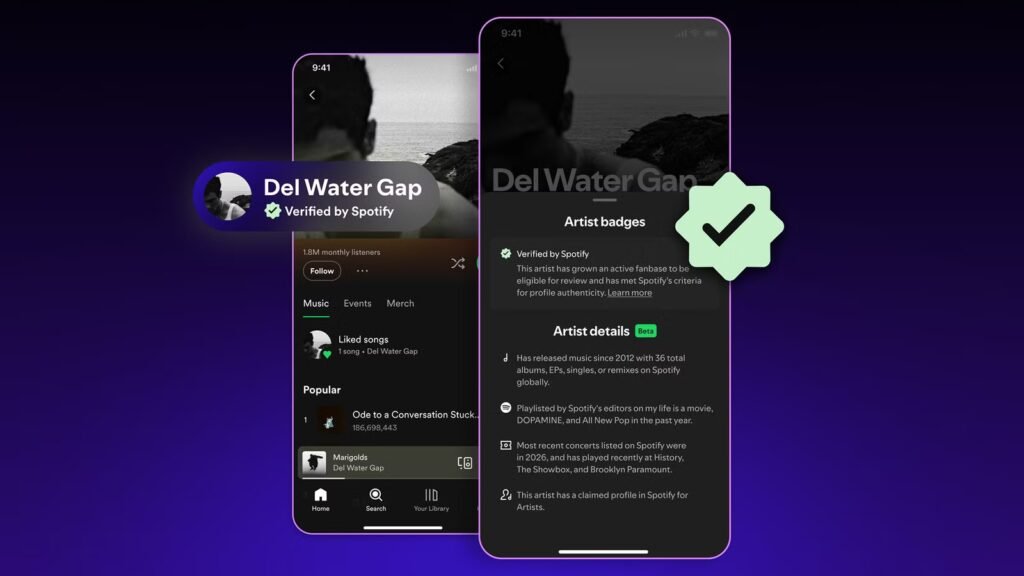

If you’re worried that the new single from a favorite musician might just be a dupe from an AI band, Spotify might have your back. The streaming music service is introducing a Verified by Spotify badge that proves an artist isn’t AI-generated or an AI persona.

Spotify will not only check that artists honor its rules, but monitor their activity for signs there are real humans involved. The company will look for presences both on and off the service, such as concerts and social media accounts. It will also look for “consistent” listener involvement — a one-time stream surge could indicate an attempt to game the system with AI-made tracks.

The verification process will include human reviews, not just automated signal flags. The aim is to label real people “behaving in good faith,” Spotify says. Badges should reach profiles and artist names in the “coming weeks,” and Spotify stresses that the absence of a badge doesn’t mean someone is an AI fake. Over 99 percent of artists will eventually be verified, the company says.

You’ll also have ways to identify authentic musicians yourself. All profiles will show release activity, touring, and other major career markers that show it’s the real artist. The new section is a beta release, but will show in the About section of mobile apps in the weeks ahead. You can also see it by tapping the “Verified by Spotify” text in banners for authenticated creators.

Spotify’s latest move to fight AI music slop

The problem is industry-wide

The Verified by Spotify badge isn’t the service’s first effort to limit AI-generated slop on its platform. In September 2025, Spotify implemented stricter impersonation rules, put in spam filters, and said it would co-develop an industry standard (DDEX) for AI disclosures. SongDNA, introduced in March, lets you learn about everyone involved in a song, such as writers and guest singers.

At the time, Spotify music head Charlie Hellman noted that the service had already pulled over 75 million “spammy tracks” in the space of a year, and that AI was mainly “accelerating” the problem. Simultaneously, he made clear that Spotify is content with AI that’s used “authentically and responsibly.” Artists who selectively use AI, such as electronic music pioneer Holly Herndon, are still allowed.

This newest move nonetheless underscores how numerous streaming services, not just Spotify, have fought to keep dishonest AI music under control. Deezer, for instance, said in September 2025 that 28 percent of daily uploads to its service were AI-generated, but that these tunes only represented 0.5 percent of streams. Apple said in January of that year that under one percent of Apple Music streams came from AI-generated content, but it’s not clear how that has changed.

Will the AI music problem get better, or worse?

It’s not certain that the Verified by Spotify badge and review processes will turn things around. AI music generation is becoming more sophisticated, and the tool creators don’t always put guardrails in place to limit abuse. Google Gemini has restrictions on length and copying artists’ exact styles, but others don’t.

As such, it’s increasingly easy for spammers to create plausible-sounding AI songs that fool listeners for just long enough to generate revenue. Technologies like DDEX might be more important than badges as they’ll do more to block spam from reaching music platforms in the first place.